Mooney Lab

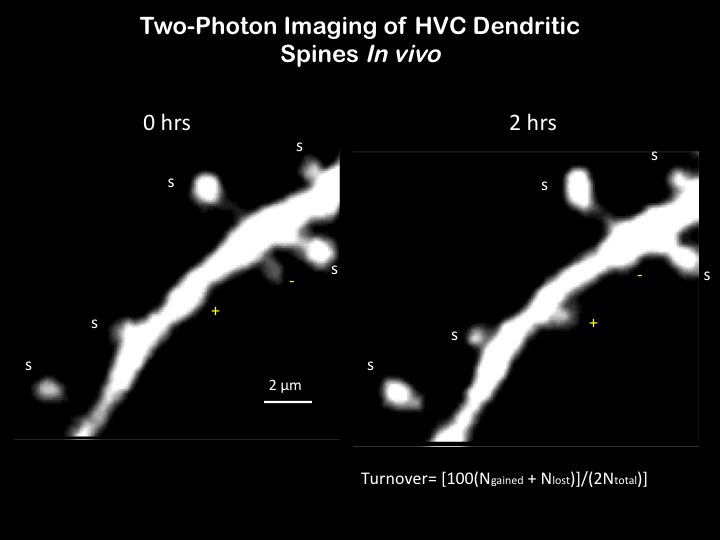

We study the neurobiology of hearing and communication, with special emphasis on the neural mechanisms of vocal learning, production and perception. Our approaches include in vivo multiphoton imaging of neurons, optogenetics, and intracellular and extracellular recordings from freely behaving songbirds and mice.

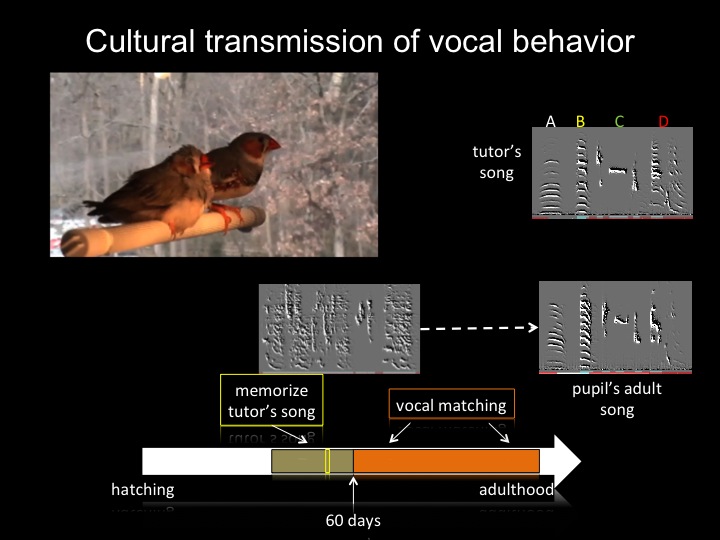

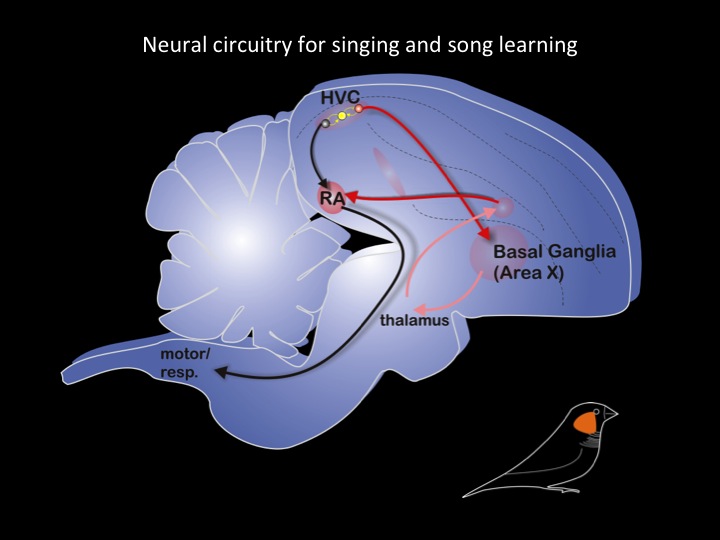

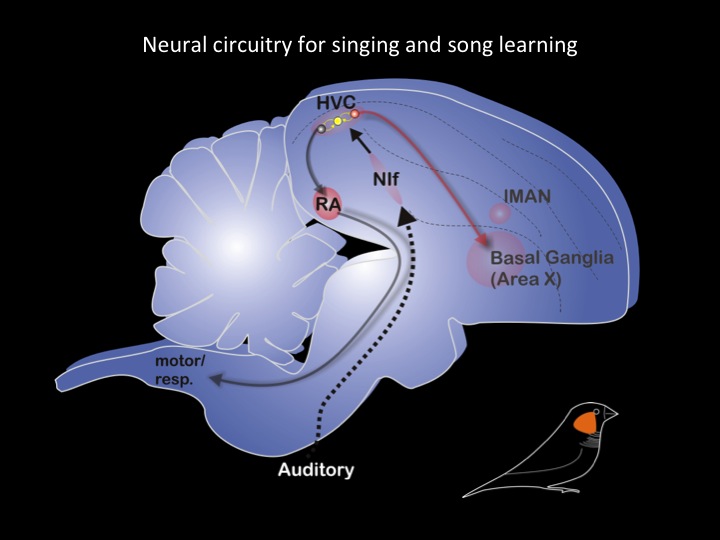

Our research aims to identify the neural substrates for communication. We use both songbirds and rodents to achieve these aims. Songbirds are among the few non-human animals that learn to vocalize, and they serve as the preeminent model in which to identify neural mechanisms for vocal learning. The songbird is ideal for this purpose because of its well-described capacity to vocally imitate the songs of other birds, and because its brain contains a constellation of discrete, interconnected regions (i.e., song control nuclei, referred to collectively as the song system) that function in the patterning, perception, learning and maintenance of song.

Our songbird studies have two major foci: elucidating how and where auditory and motor information about learned vocalizations is encoded in the brain, and identifying the mechanisms by which auditory experience modifies vocal output, as occurs during sensitive periods for vocal learning. We also study the neurobiology of audition and vocalization in mice. Although mice do not appear to be vocal learners, they do vocalize and produce other sounds as a consequence of their movements. A major focus of our current research is to understand how vocal motor and auditory regions of the brain interact during vocalizations and other sound-producing behaviors to help the organism distinguish self-generated sounds from other sounds in the environment. We are using both wild-type and genetically modified mice to identify the central neural mechanisms that underlie this form of sensorimotor integration.

We use a wide range of techniques in our research, including in vivo multiphoton neuronal imaging, chronic recording of neural activity in freely behaving animals, in vivo and in vitro intracellular recordings from identified neurons, and manipulation of neuronal activity using electrical microstimulation, focal cooling, or optogenetic methods. Our group also has extensive experience with viral transgenic methods and with behavioral analysis, especially in quantifying the acoustic features of vocalizations. Together, these methods provide a broad technical approach to understanding how the brain harnesses sensory information to adaptively modify behavior.

Mooney Publications

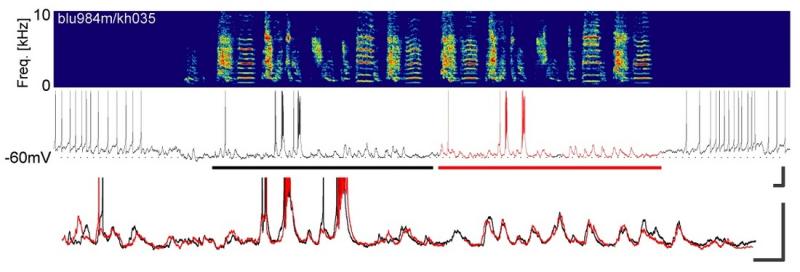

Singh Alvarado, Jonnathan, Jack Goffinet, Valerie Michael, William Liberti, Jordan Hatfield, Timothy Gardner, John Pearson, and Richard Mooney. “Neural dynamics underlying birdsong practice and performance.” Nature 599, no. 7886 (November 2021): 635–39. https://doi.org/10.1038/s41586-021-04004-1.

Mooney, Richard, Mor Ben-Tov, and Fabiola Duarte. “Data from: A neural hub that coordinates learned and innate courtship behaviors,” July 20, 2021. https://doi.org/10.7924/r4fq9z00b.

Goffinet, Jack, Samuel Brudner, Richard Mooney, and John Pearson. “Low-dimensional learned feature spaces quantify individual and group differences in vocal repertoires.” Elife 10 (May 14, 2021). https://doi.org/10.7554/eLife.67855.

Michael, Valerie, Jack Goffinet, John Pearson, Fan Wang, Katherine Tschida, and Richard Mooney. “Circuit and synaptic organization of forebrain-to-midbrain pathways that promote and suppress vocalization.” Elife 9 (December 29, 2020). https://doi.org/10.7554/eLife.63493.

Mooney, Richard, and Michael Brecht. “Editorial overview: Neurobiology of behavior.” Curr Opin Neurobiol 60 (February 2020): iii–v. https://doi.org/10.1016/j.conb.2020.01.001.

Nieder, Andreas, and Richard Mooney. “The neurobiology of innate, volitional and learned vocalizations in mammals and birds.” Philos Trans R Soc Lond B Biol Sci 375, no. 1789 (January 6, 2020): 20190054. https://doi.org/10.1098/rstb.2019.0054.

Kearney, Matthew Gene, Timothy L. Warren, Erin Hisey, Jiaxuan Qi, and Richard Mooney. “Discrete Evaluative and Premotor Circuits Enable Vocal Learning in Songbirds.” Neuron 104, no. 3 (November 6, 2019): 559–575.e6. https://doi.org/10.1016/j.neuron.2019.07.025.

Tschida, Katherine, Valerie Michael, Jun Takatoh, Bao-Xia Han, Shengli Zhao, Katsuyasu Sakurai, Richard Mooney, and Fan Wang. “A Specialized Neural Circuit Gates Social Vocalizations in the Mouse.” Neuron 103, no. 3 (August 7, 2019): 459–472.e4. https://doi.org/10.1016/j.neuron.2019.05.025.

Tanaka, Masashi, Fangmiao Sun, Yulong Li, and Richard Mooney. “A mesocortical dopamine circuit enables the cultural transmission of vocal behaviour.” Nature 563, no. 7729 (November 2018): 117–20. https://doi.org/10.1038/s41586-018-0636-7.

Schneider, David M., Janani Sundararajan, and Richard Mooney. “A cortical filter that learns to suppress the acoustic consequences of movement.” Nature 561, no. 7723 (September 2018): 391–95. https://doi.org/10.1038/s41586-018-0520-5.

Mooney Projects

1R01-DC013826-01A1 (Mooney, PI)

Motor Modulation of Auditory Processing

The goal of this project is to determine the circuit mechanisms by which motor-related signals influence auditory cortical processing.

5R01-DC002524-20 (Mooney, PI)

Sensorimotor Integration for Learned Behaviors

The overarching aim of this proposal is to use high-resolution imaging methods combined with genetic and physiological methods to study how exposure to a behavioral model and sensory feedback affect the properties of neural circuits essential to the learning and execution of complex, culturally transmitted motor sequences. (No-cost extension)

5R01-NS077986-03 (Wang, PI)

Pre-motor Neural Circuits for Exploratory Movement

This proposal uses newly developed monosynaptic rabies-virus-based trans-synaptic tracing methods combined with electrophysiology and optogenetics to precisely identify and characterize the premotor neural circuits that control exploratory “active touch” movements in mice.

Role: Co-Investigator

NSF Proposal 1354962 (Mooney, PI)

Neural Codes for Vocal Sequences

The goal of this project is to determine how auditory and motor representations of learned vocal sequences are encoded in a sensorimotor structure important to learned vocalizations in songbirds.

Lab Members

Alumni

Samuel Brudner

Former graduate student in Duke Neurobiology; current postdoctoral associate in Thierry Emonet's lab in the Department of Molecular, Cellular and Developmental Biology at Yale University

Rene Carter

Former research technician; current graduate student in Duke Neurobiology

Matt Kearney

Former graduate student

Anders Nelson

Former postdoctoral fellow

Janani Sundararajan

Current postdoctoral research associate, New York University

Katie Tschida

Former research associate

Mooney Images

Life in the Mooney Lab